Human Computer Integration Lab

Computer Science Department, University of Chicago

Essays (long-form writings from our lab)

Essay #6: Our Lab at CHI 2026 by Prof. Pedro Lopes

April 28th, 2026

This April, our lab traveled to Barcelona to take part in ACM CHI 2026, the premier international conference on Human Factors in Computing Systems. Our presence at CHI spanned 5 CHI papers, 2 demos, 1 poster, 1 panel (as speaker), 1 meetup (as organizers), and 2 workshops (as organizers). Below is a reflection on our time at CHI, what we presented, and what made this conference special for us.

TLDR: video recap

Our lab published five papers at ACM CHI 2026; you can watch all our papers in action in this video (shot in a single take).

Our paper presentations

Generative Muscle Stimulation: Providing Users with Physical Assistance by Constraining Multimodal-AI with Embodied Knowledge (Best Paper Award)

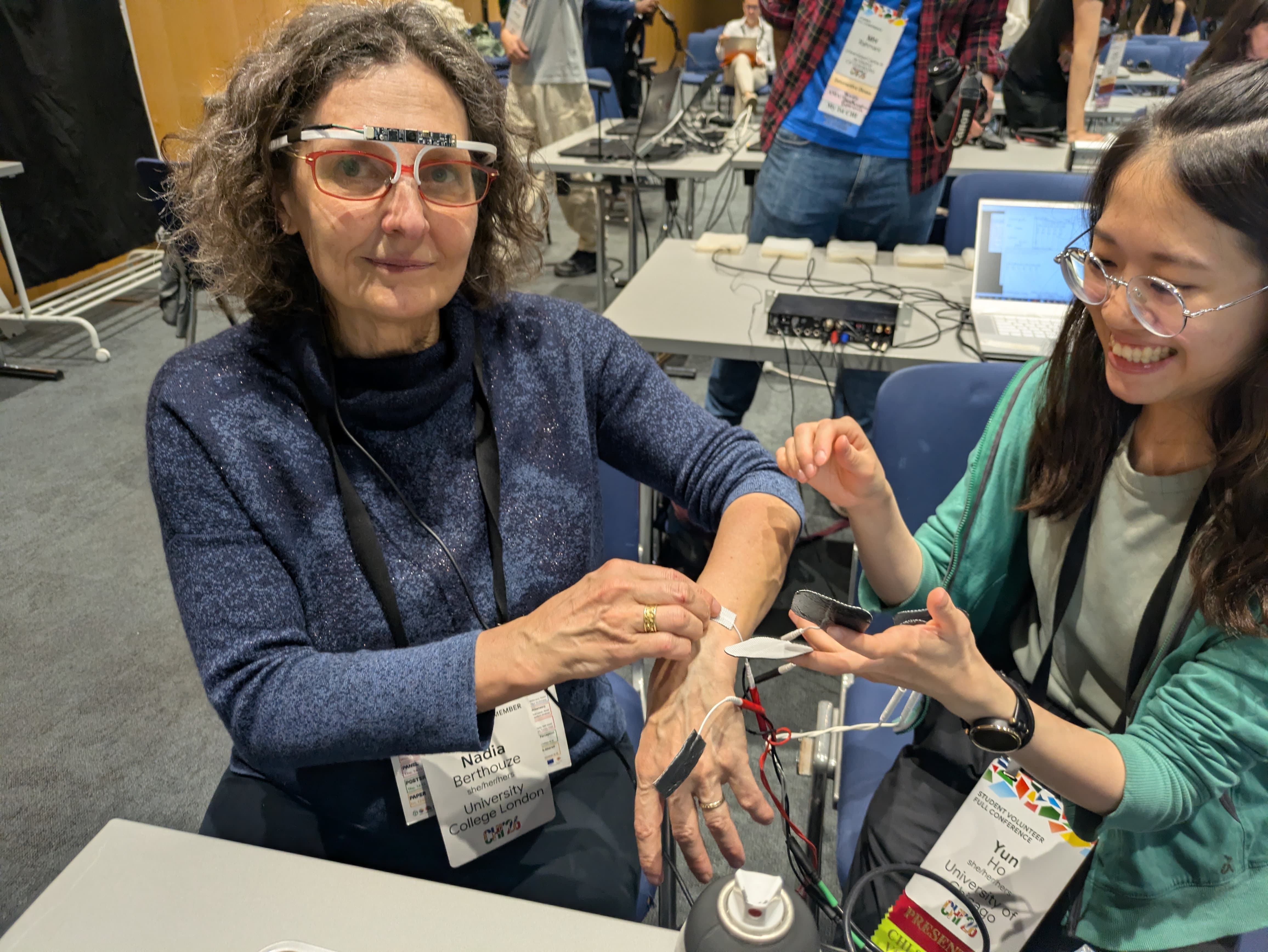

Authors: Yun Ho*, Romain Nith*, Peili Jiang, Steven He, Bruno Felalaga, Shan-Yuan Teng, Pedro Lopes (* equal contribution)

Paper link | Project page (e.g., code and more) | Demo video | CHI talk video

Key contribution: We propose a new form of embodied AI that assists users directly through muscle stimulation. The system dynamically generates assistance using multimodal inputs (body pose, vision, context), constrained by biomechanical rules. The key is that this AI is not a chatbot; it acts via the user's body, assisting with physical tasks. In contrast to physical AI that is implemented via robotics, this is not a tool that externally automates tasks (e.g., doing the dishes for the user) but one that teaches the user's body how to perform tasks.

Conference story: Romain and Yun delivered a fun live demo during the talk, which you can watch below. They showed the AI system delivering physical movement instructions while the presenter held a spray can in front of the CHI logo, which was held by a student volunteer; in this case, the AI system generated muscle instructions to shake the can before pressing the paint-dispensing plunger. Then, when they pointed the same can at croissants they got from the SV breakfast (Yun served as a student volunteer!), our AI system adapted: seeing the contextual cues from the smart glasses, it removed the shaking motion, treating this as food-safe usage, like spraying oil on food to cook. A striking demonstration of contextual embodied AI.

Myo Action: Accelerating Voluntary Actions

Authors: Yudai Tanaka, Che-Wei Hsu, Bruno Felalaga, Pedro Lopes

Paper link | Demo video | Talk video

Key contribution: This project follows our long line of work on physically assisting users while still preserving their sense of agency (this is our eighth paper on this topic; you can read them all in our agency page). Unlike our previous projects that assumed we could predict the user's intention (which we cannot, that's a really hard challenge in the field), we turned to detecting already ongoing movements with EMG. This serves as a clear indicator that the user wants to move their muscles, as the EMG signal is proof of this intention. We then trigger muscle stimulation based on this signal to assist the user. What is striking is that our device can accelerate users! This sounds paradoxical, since the movement is already underway, but EMG detects early neural signals and our device triggers EMS with ultra-low latency (290 μs), accelerating actions while preserving agency.
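For readers curious what "detecting an already ongoing movement with EMG" might look like in code, here is a minimal offline sketch. It is not the paper's implementation (the actual device runs in hardware at microsecond latencies); it assumes a simple, commonly used threshold rule in which a smoothed, rectified EMG signal must exceed the resting baseline by `k` standard deviations. The function names, the parameters `baseline_n`, `k`, and `window`, and the `stimulate` callback are all hypothetical.

```python
from typing import Callable, Optional, Sequence


def detect_emg_onset(
    samples: Sequence[float],
    baseline_n: int = 50,   # assumed: number of initial samples recorded at rest
    k: float = 5.0,         # assumed: threshold in baseline standard deviations
    window: int = 5,        # assumed: moving-average length in samples
) -> Optional[int]:
    """Return the index of the first sample at which muscle activity is detected.

    The signal is rectified, smoothed with a moving average, and compared
    against (baseline mean + k * baseline std). Returns None if no onset.
    """
    if len(samples) <= baseline_n or baseline_n < window:
        return None

    rectified = [abs(s) for s in samples]
    baseline = rectified[:baseline_n]
    mean = sum(baseline) / baseline_n
    std = (sum((x - mean) ** 2 for x in baseline) / baseline_n) ** 0.5
    threshold = mean + k * std

    for i in range(baseline_n, len(rectified)):
        smoothed = sum(rectified[i - window + 1 : i + 1]) / window
        if smoothed > threshold:
            return i
    return None


def assist_on_onset(samples: Sequence[float], stimulate: Callable[[int], None]) -> bool:
    """Call the (hypothetical) stimulation callback once an onset is found."""
    onset = detect_emg_onset(samples)
    if onset is None:
        return False
    stimulate(onset)  # stand-in for triggering EMS; no real hardware API is implied
    return True


# Synthetic example: 50 resting samples followed by a burst of activity.
rest = [0.01, -0.02] * 25
burst = [0.5, -0.5] * 10
triggered_at = []
assist_on_onset(rest + burst, triggered_at.append)
```

The point of the sketch is the ordering: stimulation only fires after the user's own muscle activity has been observed, which is the property the paragraph above relies on for preserving agency.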

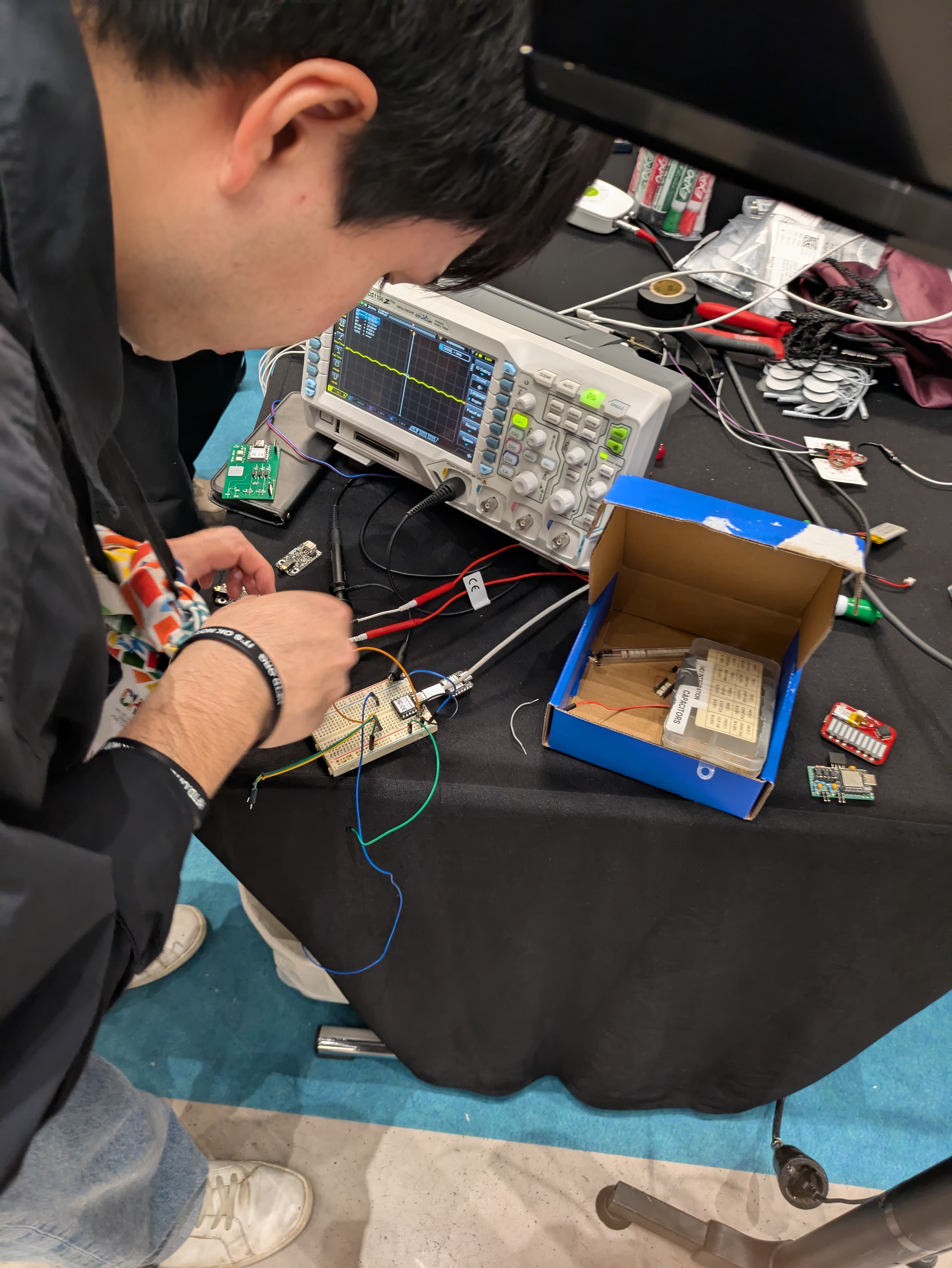

Conference story: Yudai presented the work and ran an interactive demo throughout the conference (as part of the Interactive Demos track). The EMG hardware had issues (likely circuit-related, our mistake!), but Yudai adapted on the fly and still delivered a fun experience for attendees!

Next Generation Haptics Should Balance Virtual & Real-world Fidelity

Authors: Shan-Yuan Teng, Yudai Tanaka, Alex Mazursky, Pedro Lopes

Key contribution: In this argument-based paper, we argue that our field must design haptic devices in a new way, one that balances the device's capability to render virtual haptic effects against its capability to preserve real-world touch (not interfering with feeling the physical world).

Conference story: Shan-Yuan Teng, now a professor at NTU, returned to deliver a high-energy, argument-driven talk. A special moment for the lab, as it was the first time we saw Shan-Yuan since he graduated from our lab last year and we were able to see how he is paving his own way with his newly founded lab. Tip for any prospective students reading this: consider applying to the Dexterous interaction lab at NTU.

Modeling Perceived Force of EMS to Improve Recall

Authors: Mithil Guru, Romain Nith, Pedro Lopes

Key contribution: We found that when EMS-interfaces demonstrate a force, participants trying to recall this force overshoot significantly. This force mismatch renders EMS-interfaces unable to accurately demonstrate forces, drastically limiting the growing potential of EMS for HCI. To significantly improve on this, we modeled users' recall of EMS-demonstrated forces. This model allows us to adjust EMS-interfaces to render a target force that, when recalled, best matches the intended force; in our study, this improved participants' force recall.
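To make the idea of "render a force that, when recalled, matches the intended force" concrete, here is a minimal sketch. It assumes, purely for illustration, that recalled force is a linear function of the demonstrated force; the paper's actual model form is not described in this essay, and the calibration numbers below are made up. The sketch fits the line by ordinary least squares and then inverts it.

```python
from typing import Sequence, Tuple


def fit_recall_model(rendered: Sequence[float], recalled: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of recalled = a * rendered + b (assumed linear form)."""
    n = len(rendered)
    mean_x = sum(rendered) / n
    mean_y = sum(recalled) / n
    sxx = sum((x - mean_x) ** 2 for x in rendered)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(rendered, recalled))
    a = sxy / sxx
    b = mean_y - a * mean_x
    return a, b


def force_to_render(intended: float, a: float, b: float) -> float:
    """Invert the model: which force to demonstrate so the recalled force equals `intended`."""
    return (intended - b) / a


# Hypothetical calibration data (arbitrary units): users overshoot when recalling.
rendered_forces = [1.0, 2.0, 3.0, 4.0]
recalled_forces = [2.0, 3.5, 5.0, 6.5]

a, b = fit_recall_model(rendered_forces, recalled_forces)
compensated = force_to_render(5.0, a, b)
```

With an overshooting user (slope above 1 or a positive offset), the compensated force comes out lower than the intended one, which is the direction of correction the paragraph above describes.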

Conference story: This was not Mithil's first CHI paper despite him being a master's student (he was a co-author on our AdaptiveEMS paper last CHI). However, it was his first CHI talk, and he even included a small live demo. As he prepared for it, a USB-driver connection issue occurred, and Yudai quickly stepped in with a fix. The demo succeeded, showcasing teamwork under pressure!

Increasing Input Accuracy of Embodied-devices via Electrical Muscle Stimulation

Authors: Lonnie Chien, Yudai Tanaka, Noor Amin, Jas Brooks, Pedro Lopes

Paper link | Demo video | Talk video

Key contribution: We propose interaction-techniques to increase input accuracy with embodied-devices—an emergent type of interactive-system where the user’s body serves as both the input and output medium (e.g., gestural-input via cameras/IMUs; gestural-output via motors/muscle-stimulation). A critical shortcoming of existing embodied-devices is their failure to enforce alignment between the users’ proprioceptive-inputs and interface-state. Thus, we turn to muscle-stimulation to enable embodied-devices to: recall previous interface-states; provide confirmation-cues to signal state transitions; and constrain inputs to a valid range.

Conference story: This was Lonnie’s first CHI paper and talk. It was also sort of our goodbye party with Lonnie, as he just finished his PreDoc with us and is heading to Cornell Tech for his PhD, an exciting next step.

Interactive Demos and Posters

Myo Action Demo

Conference story: We were very excited to have Myo Action accepted as a demo to this CHI (for those who did not notice, CHI 2026's acceptance rate for demos was lower than for full papers, making for a very competitive demo program!). However, our dreams were slightly shattered by some faulty EMG signal boards, which kept working unreliably (they would work on Yudai, then fail on Pedro, then fail on Yudai but work on Pedro!). Despite all these adversities, Yudai and Jerry stayed long hours fixing the boards, adjusting the demo to circumvent the insurmountable technical glitches and, overall, trying to keep the demo running and engaging throughout the conference. Kudos to Yudai and Jerry!

P.S.: Funny story: this was also Jerry's (our undergraduate summer intern last year) graduation ceremony at CHI, as you can see in the photo below. Now he is starting his master's at NTU!

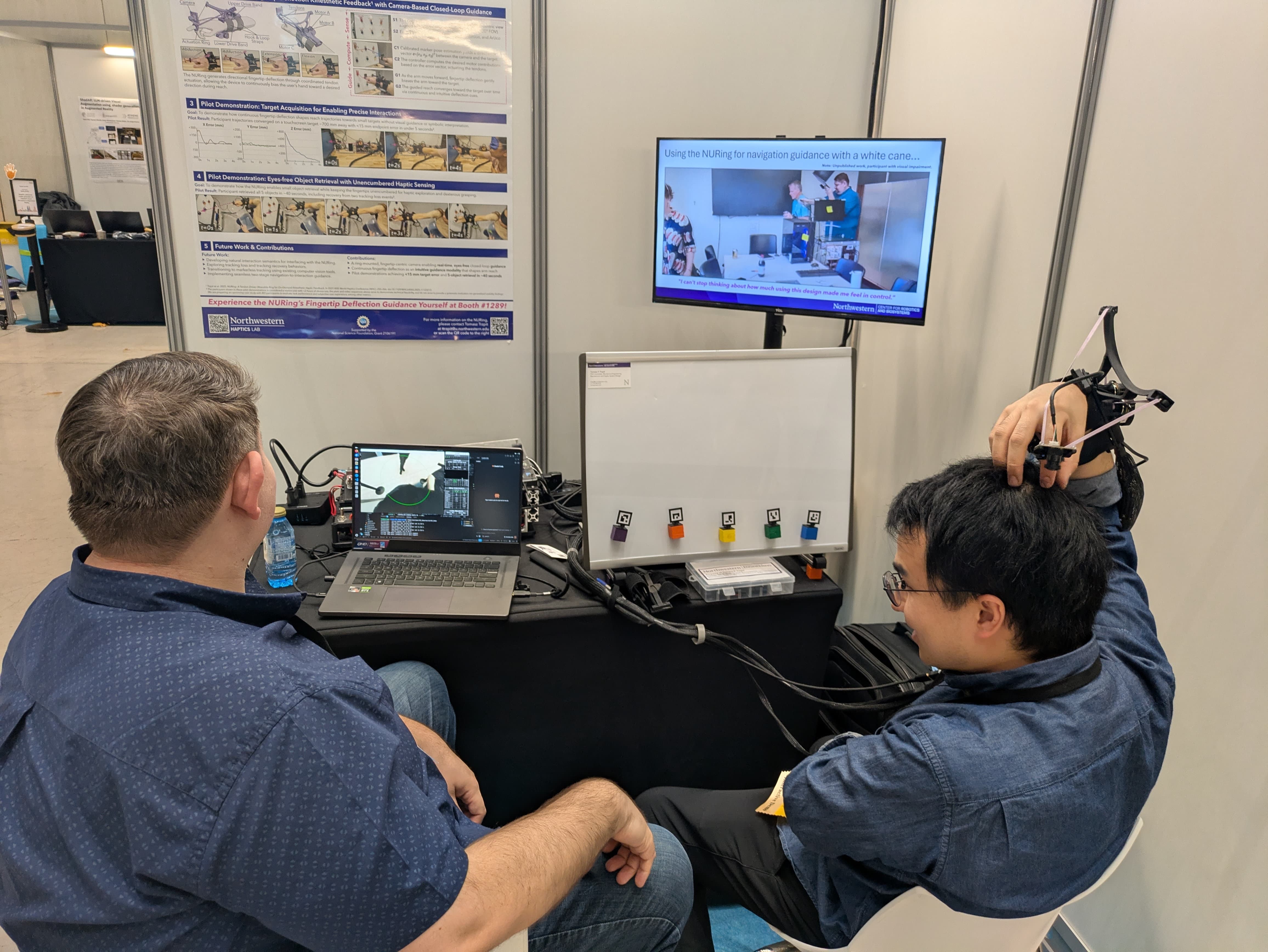

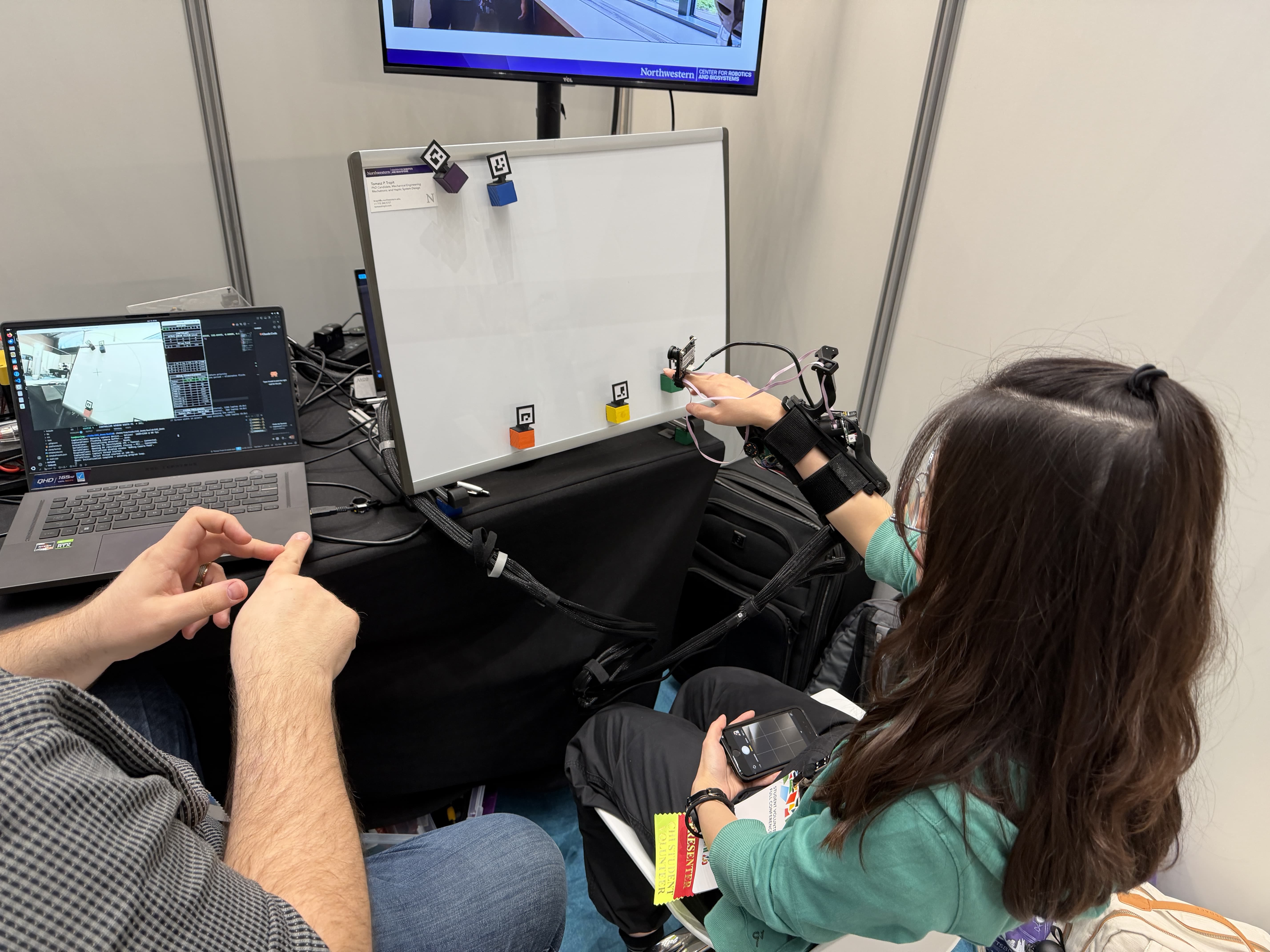

Demonstrating Eyes-Free Object Retrieval via Fingertip Deflection Guidance Using the NURing

by Tomasz P Trzpit, Gregory Reardon, Elizabeth Gerber, Pedro Lopes, Michael Peshkin, J. Edward Colgate

This was a project led by Tomasz P Trzpit from Ed Colgate's lab at Northwestern University, in which Tomasz and co-authors engineered a novel ring-based haptic device capable of eyes-free guidance! Unlike prior works that rely on symbolic cues requiring interpretation (potentially increasing cognitive load and competing with existing sensory channels), the NURing uses fingertip deflection as a continuous, physically-intuitive guidance cue that gently biases the arm during reach.

Conference story: A collaboration with Ed’s lab and Tomasz's first CHI experience, which had it all: a great demo that got damaged during travel through luggage mishandling by the hotel staff, which led to Tomasz fixing it all night, which resulted in one of the most impressive demos I (Pedro) have seen at any CHI. Congrats, Tomasz and Ed!

Workshops, Panels, Meet-Ups (as organizer)

One really exciting aspect of CHI is that it does not end with the papers and posters, but extends beyond them with networking-based activities such as workshops, panels, and, new this year, the meetup track. Our group was involved in organizing activities in all three of these tracks!

Workshop: Body Transformation Experiences

In this workshop (led by Ana Tajadura-Jiménez and also featuring our alumnus Jun Nishida, who's now a professor at the University of Maryland), we explored how the last decades of CHI have shown a growing interest in experiences that engage the moving and sensual body to alter one’s body perception. The way one’s body is perceived is highly plastic and can be altered through multisensory signals and feedback related to the body. The emerging developments in multisensory interfaces open opportunities to enrich, augment and transform body experiences in the real world through the senses. This workshop focused on the theories, approaches, methods, and tools to design multisensory technologies that elicit and support Body Transformation Experiences, and on how to best design these for and from a first-person, lived experience. We explored how to elicit and assess multisensory Body Transformation Experiences, and showcased concrete examples of supporting them with technology. Through technology presentations, panel sessions with experts, and multidisciplinary discussions, this workshop: (i) brought together researchers creating Body Transformation through sensory technology with those studying experiential effects of sensory-body interactions; (ii) mapped current methods, opportunities, and challenges in designing Body Transformation Experiences; and (iii) envisioned a road map for this field with future directions by fostering a multidisciplinary community, building collaborations, and inspiring innovative directions for design and research.

Our group was able to participate in the workshop in two ways: (1) Pedro was one of the organizers; and (2) Yun and Romain were participants, bringing their Embodied AI demo to the workshop to invite participants' feedback and trigger explorations of the workshop's key themes.

Workshop: Cultivating Pedagogies for Post-Growth HCI

Our PhD student Jasmine Lu, who was busy in Chicago with her faculty job search, helped organize this workshop with a stellar cast of researchers (including Vishal Sharma, Hongjin Lin, Han Qiao, Asra Sakeen Wani, Christina Bremer, Philip Engelbutzeder, Christoph Becker, Neha Kumar, Rikke Hagensby Jensen, and Anupriya Tuli). This workshop created a space for educators and students to critically reflect on how HCI pedagogy might move beyond "bigger-and-faster" framings and toward practices of sufficiency, repair, and care. Through activities such as co-designing a living syllabus and reimagining evaluation criteria for student work, participants explored how education can itself function as an infrastructural practice for cultivating post-growth perspectives within HCI. In doing so, this workshop aimed to foreground pedagogy as a vital site where post-growth commitments can take root, reorienting the content and practice of HCI toward cultivating socio-ecologically just futures.

Panel: Body Transformation Experiences

In this panel, Ana Tajadura-Jiménez brought together an interesting cast of speakers around the topic of body transformation experiences: Kristina Höök, Kristi Kuusk, myself (Pedro Lopes), and Mel Slater, all HCI experts with distinct yet complementary perspectives, to examine what "sustainability" means for transformative body technologies. Bridging empirical science, somaesthetic design, material innovation, and VR research, the panel explored four themes (Experience, Materiality, Everyday Integration, and Ethics/Politics) to articulate pathways for technologies that support meaningful, inclusive, ethically-grounded transformations of embodied experience. The panel was moderated by Elena Márquez Segura and Laia Turmo Vidal.

Meet-Up: NeuroHCI

Finally, our lab (Pedro, Yun and Yudai) took an organizing role in the meetups program too (a track that was new to this CHI). Together with Amber Maimon and Iddo Wald (core organizers and leaders of this initiative) and also with Jamie A Ward, Max L Wilson, Kristina Höök, and Rainer Malaka, we created the NeuroCHI meetup. This meet-up brought together researchers and practitioners interested in the timely intersection of neuroscience and human–computer interaction (NeuroHCI). Advances in performance and accessibility of methods such as EEG, fNIRS, BCIs, and biosensing open new possibilities for design and interaction while also raising conceptual, technical, and ethical challenges. The session employed engaging, interactive activities to maximize dialog, including an exercise that invited participants to experience embodied approaches to interaction. Our goal is to catalyze interdisciplinary collaboration, strengthen and grow the NeuroHCI community, and identify promising directions for future research and practice. This meetup followed up on (and in fact used a similar title to) our recent Shonan mini-conference (you can read about it here) and our CHI 2024 panel (organized by Pedro and Yudai, which you can re-watch here).

If you want to stay in touch with the NeuroCHI community, we have a Slack channel; ask us if you would like to join it!

Our workshop participation

Workshop: Augmented Body Parts: Bridging VR Embodiment and Wearable Robotics

Participating authors from our group: Yun Ho and Romain Nith

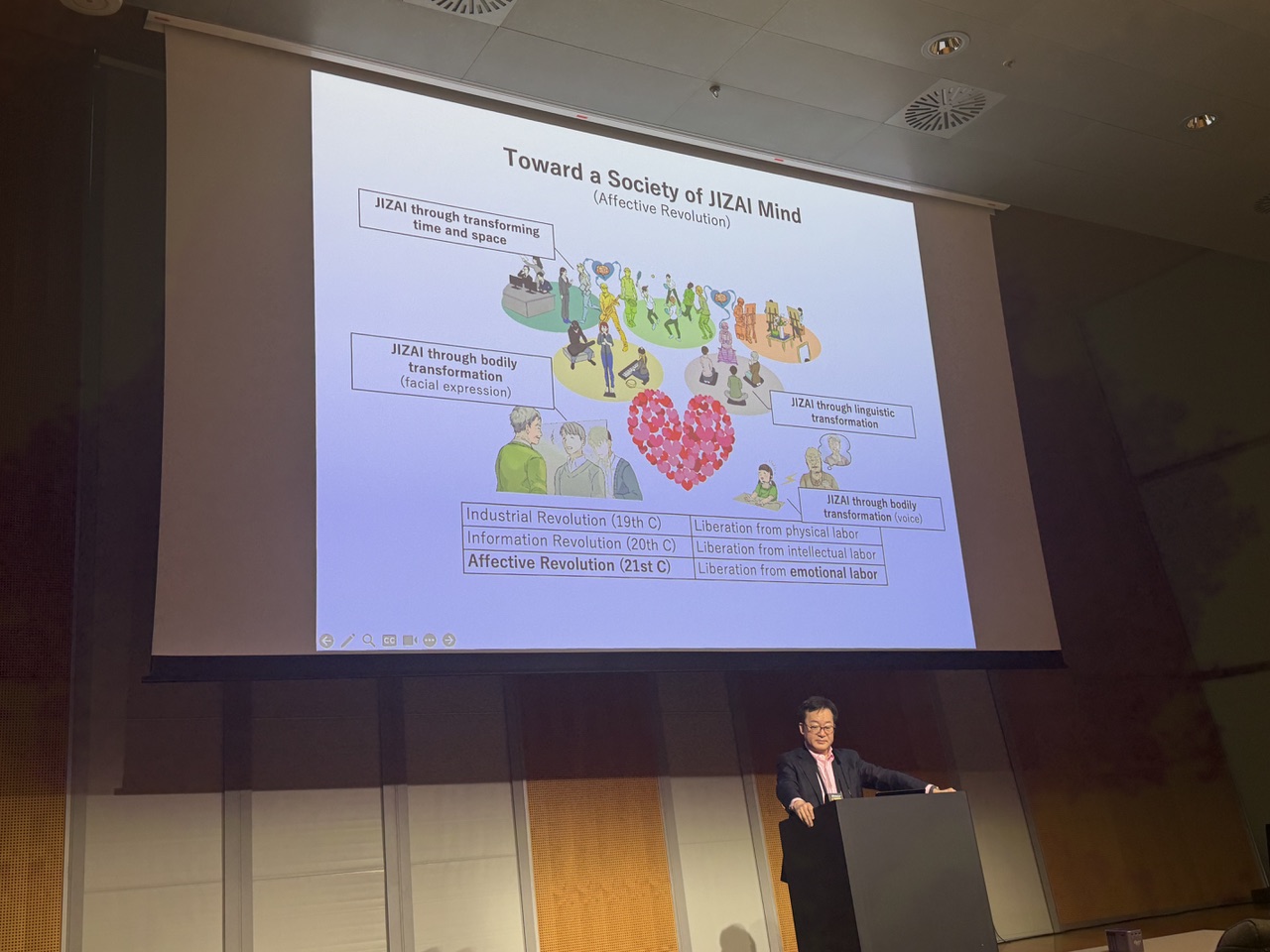

Our PhD students Romain Nith and Yun Ho participated in this workshop, which explored what kinds of augmented body parts could be added to the human body and what purposes they might serve. Through two rounds of small-group brainstorming (first on the types of body augmentations possible, then on their functions and goals), participants generated and presented a wide range of fun ideas to the full group. The workshop also featured a keynote by Prof. Masahiko Inami, who showcased over a decade of research in this space, spanning supernumerary robotic limbs, telepresence robotics, and his vision towards JIZAI mind. Our former summer intern, Jerry, was also an SV in this session!

Workshop: AI for Haptics, Haptics for AI

Participating authors from our group: Yun Ho and Romain Nith

Our PhD students Romain Nith and Yun Ho presented our Embodied AI (CHI 26 full paper) work at this workshop, joining 13 other presenters for a series of lightning talks at the intersection of AI and haptics. Together, participants explored how AI methods can advance haptic design and rendering, and, conversely, how haptic feedback can enrich AI systems—all while discussing broader challenges, opportunities, and ethical considerations for this field.

Service

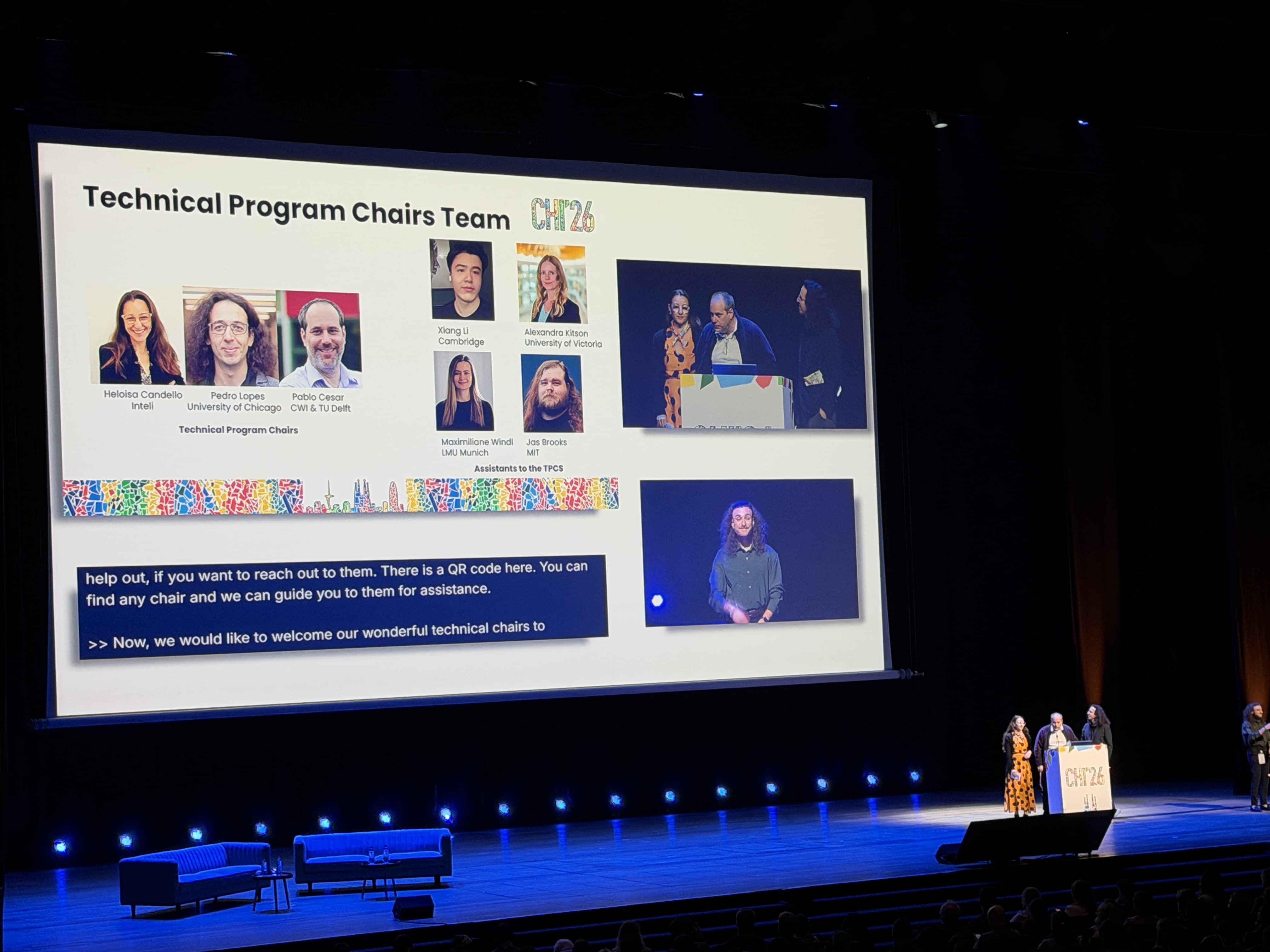

Pedro Lopes served as Technical Program Chair (TPC) alongside Heloisa Candello and Pablo Cesar. Together with General Chairs Nuria Oliver and Ayman Shamma, and almost a hundred other chairs and volunteers, we all formed the core of the CHI 2026 organization team (see the full team).

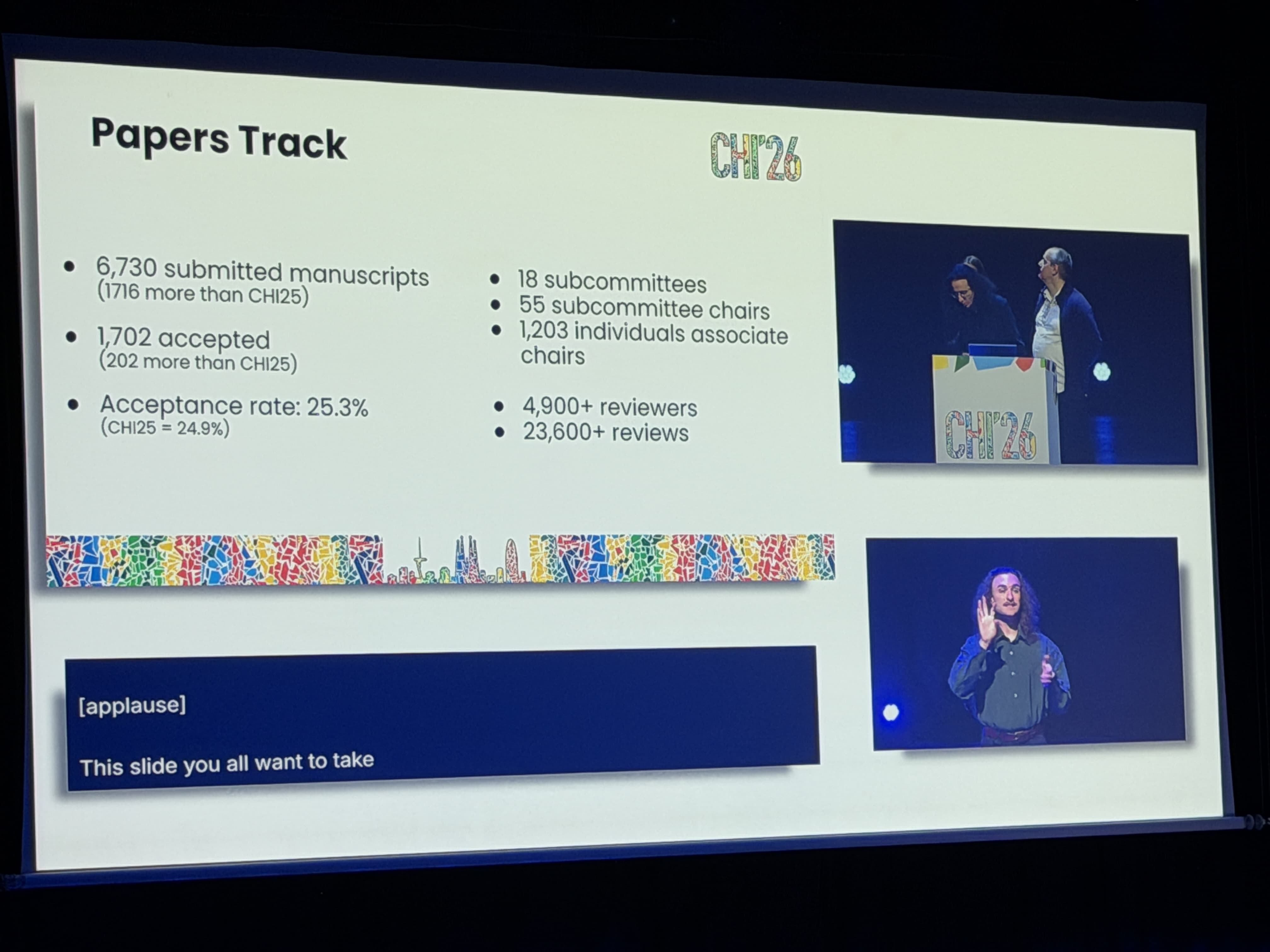

Also worthy of note in the role of Technical Program Chair (TPC) was the opening plenary: the really packed room shown below, with over 4000 attendees excited to kick off CHI 2026. In this opening ceremony, we were able to highlight the massive scale of this CHI (the biggest in history), with over 6700 full-paper submissions (and ~1700 accepted papers)!

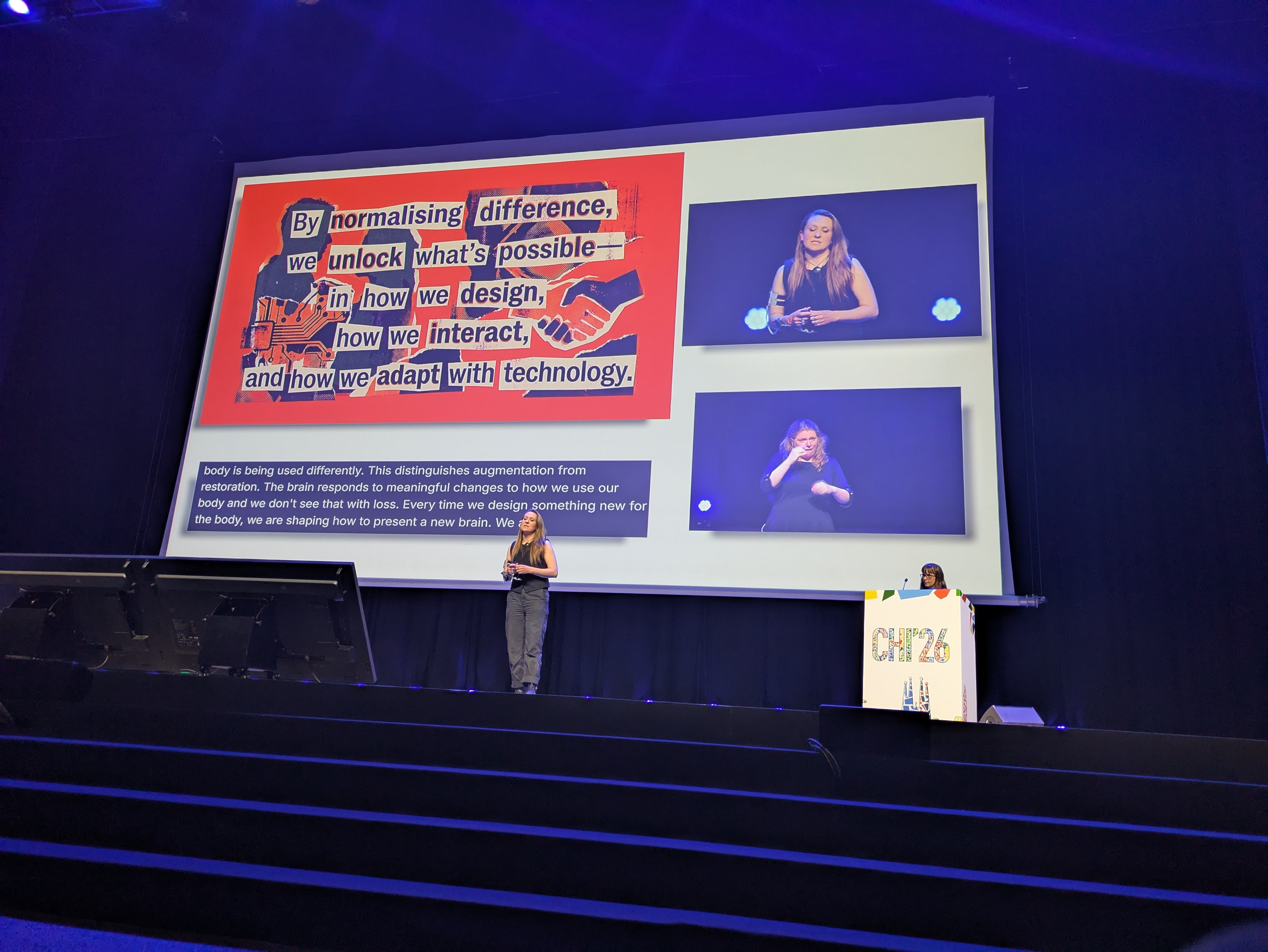

At the closing plenary, I (Pedro) got to be on stage for the Q/A with our keynote speakers Tamar Makin and Dani Clode (whom I had suggested as keynote speakers to the Keynote chairs).

Finally, the organizational work to pull off a conference with 5500+ in-person attendees and 1700+ papers merits its own essay, but suffice it to say the conference was a success and we will miss our organizer team (especially our assistants Jas, Maxi, Xiang and Alex), with whom we spent almost two years (weekly and sometimes daily) planning this fantastic event. You can see a video below that highlights what CHI 2026 felt like on the ground.

Closing Thoughts

This was a defining year for the lab—scientifically and collaboratively. With me (Pedro) focused on helping to run the conference, the lab came together in a remarkable way: supporting each other, stepping up in critical moments, and delivering across all fronts!

Intellectually, in our work at CHI 2026, our most deeply shared theme emerged: computing that operates through the body. We hope to see you at CHI 2027 (and we wish the 2027 organizational team the best of luck with this huge endeavour and thank them for their service to CHI).

p.s.: want to watch all our CHI 2026 videos in one playlist? See below!